Post mortem

What did we learn?

Our many customer conversations served as a valuable reality check on our initial assumptions. Here are some key takeaways for my team, along with some of my personal thoughts:

Customer needs are diverse

A common oversight in UX is to treat personas as monolithic groups. But even within a well-defined user group, like the specialized engineers in this project, significant variations in work styles and preferences quickly dispel that misconception. Accommodating such a diverse range of needs became a balancing act.

Not speaking directly with your customers can lead to products that miss the mark

I empathize with the difficult situation our management team faced in dealing with our most important customers, a group of engineers at BMW and Audi who, if we’re being honest, didn’t really want to work with us. However, relying solely on a list of requirements limited what we could do. A deeper understanding of their goals, needs, and pain points would have let us explore solutions to their specific challenges and produce better outcomes. I’d love to tell a story about how UX and product rose above this challenge and delighted our customers, but our only opportunity for engagement was a single four-hour phone meeting in which we were kept on mute. For the entire first hour, the engineers angrily complained about our product. We had let them down. While this experience wasn’t ideal for product development, it was a valuable lesson in the importance of open communication and user-centered design.

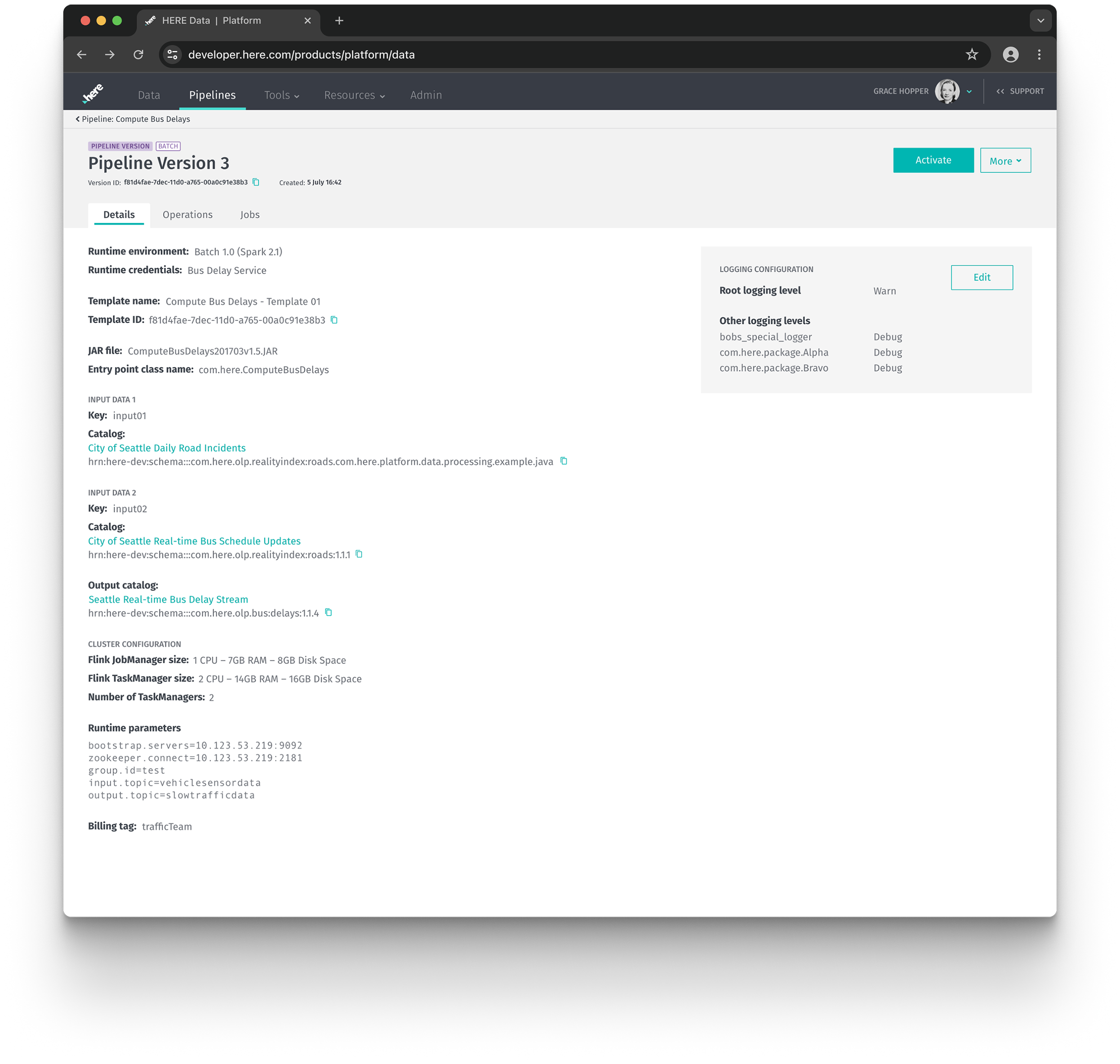

Don’t skip contextual interviews

I’m a big fan of sitting with users at their desks and watching them work. However, because of the logistics of the HERE Berlin office, where many of our internal customers work, many of our early interviews were held in conference rooms. In those sessions, the data engineers we spoke with focused primarily on the development side of their workflow: the ‘research’, ‘coding’, and ‘deployment’ phases. How significant maintaining operational data pipelines was to their daily work only became apparent later, when I was finally able to conduct contextual interviews and observe engineers at their desks. The lesson: we can’t rely solely on users to articulate their entire workflow. Contextual interviews, where users are observed in their natural environment, are a great method for uncovering the details they leave out.